A Future Battle For Compute

Artificial superintelligence is, by definition, artificial intelligence that far exceeds human intelligence. If artificial superintelligence one day becomes a reality, we will be intellectually far too inferior to protect ourselves from it. Instead, we will have to enlist the help of another artificial superintelligent agent. In artificial intelligence, an intelligent agent (IA) is anything that perceives its environment, takes actions autonomously to achieve goals, and may improve its performance through learning or by using knowledge. Agents may be simple or complex: a thermostat is considered an example of an intelligent agent, as is a human being.

This scenario sets the scene for a sort of artificial intelligence ‘cold war’, which on the internet will play out as a perpetual battle between AI-developed security systems and AI-developed hacking systems.

Artificial Intelligence Cold War [StableDiffusion]

The AI agents on both sides are likely to be very similar in their technical abilities. Both agents need knowledge of how to find vulnerabilities and how they can be exploited. The difference would come in the instructions that we humans give them.

However, it is unlikely that the two agents would actually be the same. Instead, security companies will develop their own agents and keep them in-house to protect their methods and secrets. In response, hackers will likely do the same.

The more compute and data you can access, the bigger and better the agent you can train. It is therefore vital for each side to obtain as much computing power and as much data as possible.

If the security agent is more potent (assuming it knows everything the hacking agent knows, and more), then even given unlimited time, the hacking agent will not be able to break in. If the hacking agent is more potent, the opposite is true.
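
The dominance argument can be made concrete with a deliberately simplified toy model. It assumes, purely for illustration, that an agent's knowledge is a set of vulnerability identifiers (the CVE-style labels below are placeholders, not real entries), that the security agent patches every vulnerability it knows about, and that the hacking agent, given unlimited time, tries every vulnerability it knows.

```python
# Toy model (an illustrative assumption, not a real security tool):
# each agent's knowledge is a set of vulnerability IDs.

def attack_succeeds(defender_knows: set[str], attacker_knows: set[str]) -> bool:
    """Return True if the attacker knows a vulnerability the defender has not patched.

    The defender is assumed to patch everything it knows about, and the attacker,
    given unlimited time, is assumed to try everything it knows about.
    """
    unpatched = attacker_knows - defender_knows
    return len(unpatched) > 0

# Defender knows everything the attacker knows, and more: the attack fails.
print(attack_succeeds({"CVE-A", "CVE-B", "CVE-C"}, {"CVE-A", "CVE-B"}))  # False

# Attacker knows a vulnerability the defender does not: the attack succeeds.
print(attack_succeeds({"CVE-A"}, {"CVE-A", "CVE-B"}))  # True

# Defender knows more vulnerabilities in total, but not the attacker's one.
print(attack_succeeds({"CVE-A", "CVE-B", "CVE-C"}, {"CVE-D"}))  # True
```

The last call shows why the parenthetical assumption matters. A security agent that simply knows more vulnerabilities, but not the attacker's, still loses. In this model the defence holds only when the defender's knowledge is a superset of the attacker's.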

In the above example, we want the security agent to be more potent than the hacking agent. If it is not, nothing on the internet can be trusted, rendering it useless for many purposes, especially e-commerce. So, how can we ensure that the security agent is more potent?

Regulation

As we have already discussed, the two factors that determine an agent’s intelligence are compute and data. Unfortunately, data is difficult to control: much of it is readily accessible (the internet, books, etc.), and in the future much of it may be AI-generated. So if we can’t control data, let’s look at compute.

The amount of compute needed to train current-day models is already very high. For example, training OpenAI’s GPT-3 (175B parameters) took about 3.14E23 FLOPs, which may have cost around $5 million. With this much compute needed, it’s no surprise that these models are trained on highly specialised machine learning GPUs, such as Nvidia’s Tesla V100.
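
The FLOP figure can be roughly reproduced with the widely used approximation that training a dense transformer costs about 6 FLOPs per parameter per training token, combined with the 300 billion training tokens reported for GPT-3. The per-GPU throughput (28 TFLOP/s sustained on a V100) and the cloud price ($1.50 per GPU-hour) are assumptions chosen for illustration. The dollar figure is therefore only an order-of-magnitude estimate.

```python
# Back-of-the-envelope GPT-3 training cost, assuming the common
# "about 6 FLOPs per parameter per training token" approximation.

PARAMS = 175e9             # GPT-3 parameter count
TOKENS = 300e9             # training tokens reported for GPT-3
V100_FLOPS = 28e12         # ASSUMED sustained throughput per V100, in FLOP/s
PRICE_PER_GPU_HOUR = 1.50  # ASSUMED cloud price per V100-hour, in USD

def training_flops(params: float, tokens: float) -> float:
    """Approximate total training compute: ~6 FLOPs per parameter per token."""
    return 6 * params * tokens

def gpu_hours(total_flops: float, flops_per_gpu: float) -> float:
    """Single-GPU hours needed at a given sustained throughput."""
    return total_flops / flops_per_gpu / 3600

flops = training_flops(PARAMS, TOKENS)
hours = gpu_hours(flops, V100_FLOPS)
print(f"{flops:.2e} FLOPs")                          # 3.15e+23 FLOPs
print(f"{hours / (24 * 365):.0f} GPU-years")         # 357 GPU-years
print(f"${hours * PRICE_PER_GPU_HOUR / 1e6:.1f}M")   # $4.7M
```

Changing either assumed constant scales the cost estimate proportionally. For example, doubling the sustained throughput halves the GPU-hours and the price.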

Governments or other regulatory bodies could restrict how much compute organisations can own, preventing organisations that do not meet set criteria from purchasing more than a set amount. This would be similar to how some countries restrict the amount of land an organisation can own, so that large organisations cannot gain too much control. The idea is that capping compute could stop bad actors from getting as much access to it as security companies have.
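
Such a restriction amounts to a simple purchase check. The sketch below is purely hypothetical. The `Organisation` fields, the use of a licence to stand in for "meeting set criteria", the V100-equivalent unit and the cap of 1,000 GPUs are all illustrative assumptions, not any existing regulation.

```python
from dataclasses import dataclass

# HYPOTHETICAL cap: organisations that do not meet the criteria may own at most
# this much compute. Unit (V100-equivalent GPUs) and value are assumptions.
UNLICENSED_CAP_GPUS = 1_000

@dataclass
class Organisation:
    name: str
    licensed: bool    # hypothetical stand-in for "meets the regulator's criteria"
    owned_gpus: int   # compute already owned, in V100-equivalent GPUs

def may_purchase(org: Organisation, requested_gpus: int) -> bool:
    """Return True if the purchase keeps the organisation within the (hypothetical) rules."""
    if org.licensed:
        return True  # organisations meeting the criteria face no cap in this sketch
    return org.owned_gpus + requested_gpus <= UNLICENSED_CAP_GPUS

print(may_purchase(Organisation("SecurityCo", True, 50_000), 10_000))  # True
print(may_purchase(Organisation("SmallCo", False, 900), 200))          # False
print(may_purchase(Organisation("SmallCo", False, 900), 100))          # True
```

Exempting qualifying organisations entirely is just one possible design. A regulator could equally set tiered caps that depend on which criteria an organisation meets.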

However, it is essential to note that such a policy could have unintended consequences and must be carefully considered before implementation. For example, a black market for compute may emerge, which bad actors could use to obtain the compute they need. Restrictions might also incentivise the creation of botnets, in which consumers’ hardware is hijacked and used without their knowledge. On the other hand, perhaps the growing trend of cloud computing will make botnets obsolete.

A ceiling for intelligence?

All of this rests on the assumption that intelligence will keep growing as compute and data become more abundant. And is it always true that a more intelligent AI outperforms a less intelligent one?

Take, for example, a seemingly trivial task like colour recognition. An agent that correctly identifies every colour with 5,000 parameters will be no worse than one that does so with 1M parameters. In this case, further training cannot improve the agent’s output, because there are set truths that the agent must learn (yellow is yellow, blue is blue, etc.) and nothing beyond them. Therefore, if a smaller agent’s output is already correct, a larger model cannot improve upon it.
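
The colour example can be simulated with a toy setup. It assumes, for illustration only, that each of six colour names is defined by a reference RGB value and that observed colours are that reference plus bounded noise. A small nearest-centroid model (18 numbers) and a much larger model that memorises every training example (3,600 numbers) both classify every test colour correctly. Since accuracy cannot exceed 1.0, the larger model has nothing left to improve.

```python
import random

# ASSUMED "set truths": six colour names, each defined by a reference RGB value.
REFERENCE = {
    "red": (255, 0, 0), "green": (0, 255, 0), "blue": (0, 0, 255),
    "yellow": (255, 255, 0), "black": (0, 0, 0), "white": (255, 255, 255),
}

def sample(name, rng, noise=40):
    """Draw a colour near its reference value, clipped to the valid 0-255 range."""
    return tuple(min(255, max(0, c + rng.randint(-noise, noise))) for c in REFERENCE[name])

def make_data(n_per_colour, rng):
    """Labelled (rgb, name) pairs, n_per_colour for every colour."""
    return [(sample(name, rng), name) for name in REFERENCE for _ in range(n_per_colour)]

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def train_small(data):
    """Nearest-centroid model: one mean RGB value per colour (6 x 3 = 18 numbers)."""
    centroids = {}
    for name in REFERENCE:
        points = [rgb for rgb, label in data if label == name]
        centroids[name] = tuple(sum(ch) / len(points) for ch in zip(*points))
    return lambda rgb: min(centroids, key=lambda n: sq_dist(rgb, centroids[n]))

def train_large(data):
    """1-nearest-neighbour model: memorises every training example (3 numbers each)."""
    return lambda rgb: min(data, key=lambda ex: sq_dist(rgb, ex[0]))[1]

def accuracy(model, data):
    return sum(model(rgb) == label for rgb, label in data) / len(data)

rng = random.Random(0)
train, test = make_data(200, rng), make_data(50, rng)
small, large = train_small(train), train_large(train)
print(accuracy(small, test), accuracy(large, test))  # 1.0 1.0
```

Because every pair of reference colours differs by 255 in at least one channel and the noise is bounded at 40, the classes never overlap. That is the toy equivalent of a task made entirely of set truths.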

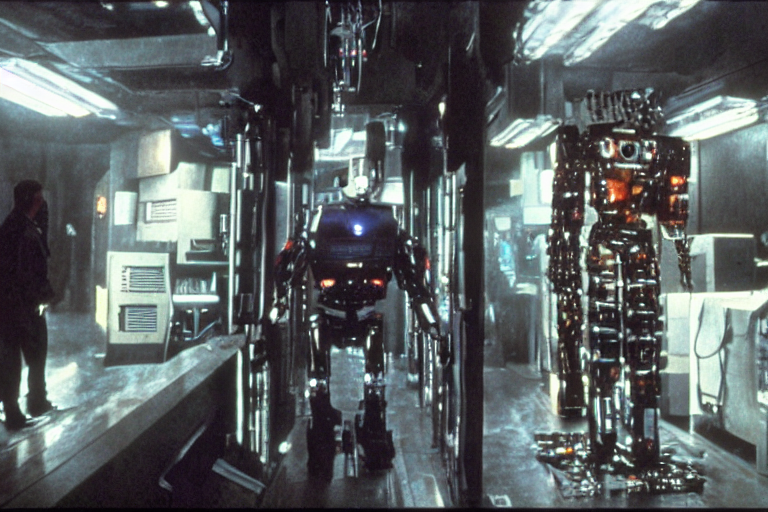

|

|---|

| Humanoid robot factory [StableDiffusion] |

Now take a more difficult task, such as building an impenetrable security system. Despite being a far more complex problem, what if it has set truths too? They may be too complex for us humans to see, but perhaps not for a machine. If set truths exist here as well, there must also be a point beyond which no amount of additional training lets the agent improve upon its output.

Might this be the case for all conceivable problems? If so, is it physically possible to build an agent large enough to compute all truths? What about something like the stock market, which seems infinitely complex? Perhaps it is made up of thousands or millions of set truths? And does information theory tell us that everything can be broken down into bits, and therefore into simple set truths?

To conclude, artificial superintelligence is likely to reach a level of intelligence where further training can no longer improve its performance on mundane tasks such as programming. Unfortunately, we cannot tell how long it will take to reach this level. It could be years, decades, or even centuries, and we do not know where the limit of intelligence on such tasks lies, or whether a limit exists in the first place.